The Unknown Unknowns Problem: Why We Can't Deploy Fully Autonomous AI Agents Yet

Our AI is 97% accurate, but we still can't remove humans from the workflow.

We’re stuck.

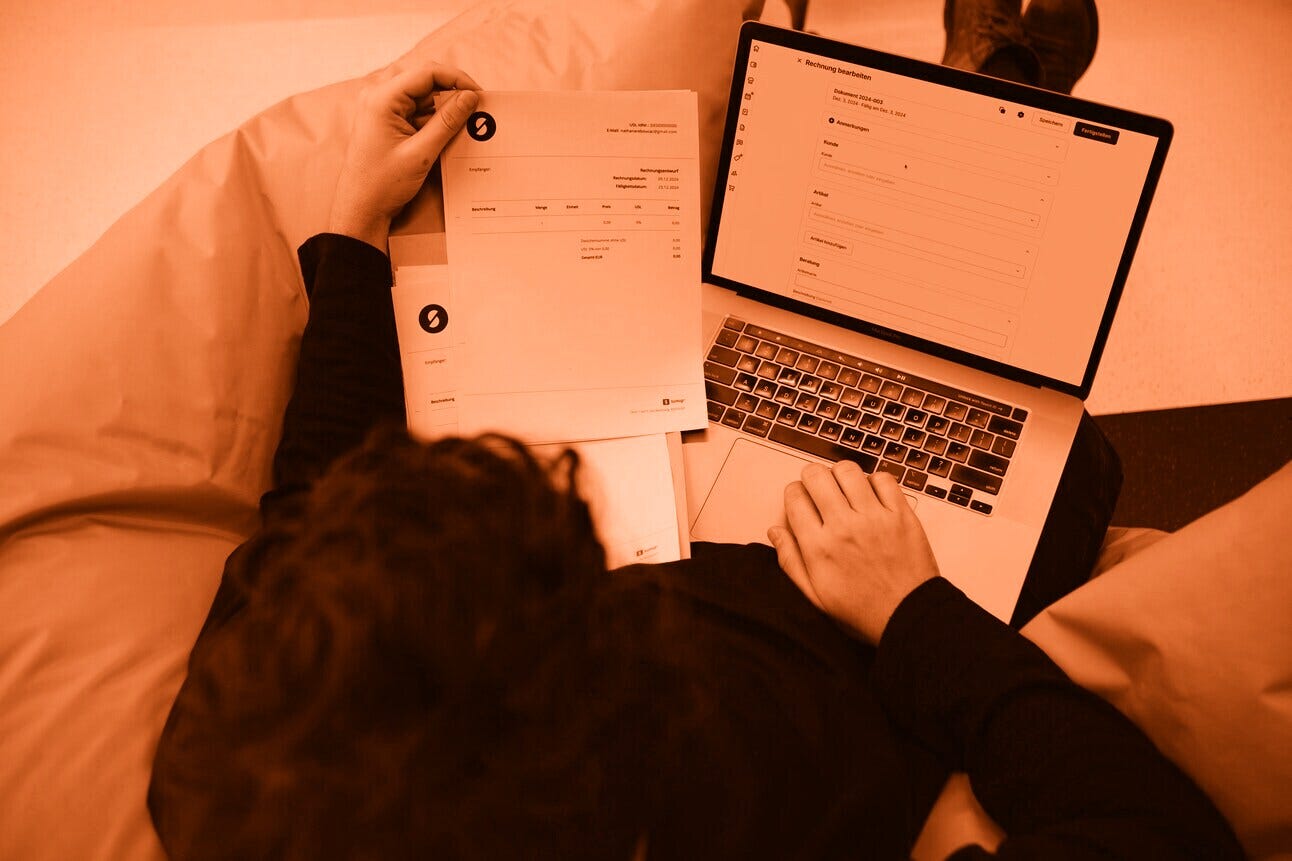

Our LLMs extract invoice data with 97% accuracy. They parse sales orders. They categorize transactions. They work.

But we can’t remove the human from the loop.

Not because the AI isn’t good enough. Because we can’t reliably detect when it’s wrong.

Here’s the problem: I ask an LLM to extract line items from an invoice. It returns seven items. How do I know there weren’t eight?

I ask it to pull buyer information from a sales order. It gives me a name, address, and tax ID. How do I verify it didn’t miss a secondary contact or a special delivery instruction?

I can’t. Not without manually checking the original document. Which defeats the entire point of automation.

This is the unknown unknowns problem, and it’s the bottleneck keeping us from fully autonomous agents.

The Confidence Score Illusion

The obvious solution is to ask the LLM how confident it is.

We tried this. The model evaluates its own response and returns a confidence score.

Sometimes it works. The model says “I’m 60% confident” and we flag it for human review. Good.

But sometimes the model says “I’m 95% confident” and it’s completely wrong. It missed a line item. It hallucinated a field. It merged two separate entries.

High confidence, wrong answer. That’s the nightmare scenario for autonomous systems.
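To make the failure concrete, here is a minimal sketch of confidence-based routing. The `Extraction` type, the 0.8 threshold, and the sample invoices are hypothetical illustrations, not our production code. It shows how a confident miss goes straight through.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.8  # hypothetical cutoff, chosen only for illustration


@dataclass
class Extraction:
    invoice_id: str
    line_items: list[str]
    confidence: float  # self-reported by the model, 0.0 to 1.0


def route(extraction: Extraction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Send low-confidence results to a human, auto-approve the rest."""
    if extraction.confidence < threshold:
        return "human_review"
    return "auto_approve"


# The model is unsure, so the result gets flagged. This is the good case.
unsure = Extraction("INV-001", ["widget", "gasket"], confidence=0.60)

# Suppose the real invoice had three items. The model missed one
# but still reports high confidence, so nothing flags it.
confident_miss = Extraction("INV-002", ["widget", "gasket"], confidence=0.95)

print(route(unsure))          # human_review
print(route(confident_miss))  # auto_approve
```

The router only ever sees the number the model reports. It has no way to know that the second invoice is missing an item.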

When you’re deploying agents in financial systems or wholesale operations, you need 99.99% accuracy. Not because perfection exists (humans make errors too), but because that’s the threshold where the economics of automation actually work.

97% sounds good until you realize that a 3% error rate means three mistakes per hundred invoices. At scale, that’s dozens of errors per day. Errors that compound. Errors that break trust.

And the worst part? You don’t know which 3% are wrong until someone manually checks.

The Autonomous Agent Paradox

Here’s what’s ironic: we have the technology to build autonomous agents right now. The LLMs are capable. The integrations exist. The infrastructure is ready.

But we’re all still running human-in-the-loop workflows.

Why? Because nobody has solved verification.

My clients don’t lack confidence in AI’s ability to do the work. They lack confidence in their ability to detect when AI screws up.

That’s a fundamentally different problem. And it’s blocking the entire industry from moving past assisted AI into truly autonomous AI.

What We’ve Tried

The standard approaches don’t cut it:

Confidence scores: As I mentioned, they lie. Models are confidently wrong.

Self-evaluation: Asking the same model to check its own work is like asking someone to proofread their own writing. They’ll miss the same mistakes twice.

Structured output validation: You can verify that the JSON is valid, that required fields are present, that formats match. But you can’t verify completeness. You can’t know what’s missing.

Deterministic checks: For some tasks, you can add logic. Invoice totals should match the sum of line items. Dates should be chronologically consistent. But for pure extraction? There’s no deterministic way to confirm you got everything without seeing the original.
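The structural and deterministic checks above can be sketched roughly as follows. The field names (`invoice_date`, `due_date`, `total`, `line_items`, `amount`) are an assumed schema for illustration, not a real invoice standard.

```python
from datetime import date
from decimal import Decimal

# Hypothetical schema: the fields we assume every extracted invoice must have.
REQUIRED_FIELDS = ("invoice_date", "due_date", "total", "line_items")


def validate_invoice(invoice: dict) -> list[str]:
    """Return a list of problems found; an empty list means every check passed."""
    missing = [f for f in REQUIRED_FIELDS if f not in invoice]
    if missing:
        return [f"missing field: {f}" for f in missing]

    problems = []
    # Deterministic check 1: line items must add up to the stated total.
    line_sum = sum(
        (Decimal(item["amount"]) for item in invoice["line_items"]),
        Decimal("0"),
    )
    if line_sum != Decimal(invoice["total"]):
        problems.append(f"total {invoice['total']} != line item sum {line_sum}")
    # Deterministic check 2: the due date cannot precede the invoice date.
    if date.fromisoformat(invoice["due_date"]) < date.fromisoformat(invoice["invoice_date"]):
        problems.append("due date precedes invoice date")
    return problems


complete = {
    "invoice_date": "2024-03-01",
    "due_date": "2024-03-31",
    "total": "100.00",
    "line_items": [{"amount": "40.00"}, {"amount": "60.00"}],
}
# Same invoice, but the extraction dropped the second line item.
dropped_item = {**complete, "line_items": [{"amount": "40.00"}]}

print(validate_invoice(complete))      # no problems reported
print(validate_invoice(dropped_item))  # the total mismatch is reported
```

The dropped line item is caught only because the total happens to cross-check it. A missed secondary contact or delivery instruction has no such cross-check, so both invoices would pass.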

We’re currently using confidence scores combined with human review. It’s better than nothing. But it’s not autonomous.

The Council Approach

Andrej Karpathy recently proposed something interesting: an LLM council.

The idea is simple. Run the same query through multiple different models: GPT, Claude, Gemini, Grok. Let them see each other’s responses. Have them evaluate and rank each other. Then use a “Chairman LLM” to synthesize the final answer.

What’s clever about this: models are surprisingly willing to admit when another model did better. They’ll look at competing responses and say “yeah, that one’s more complete than mine.”

Could this work for extraction tasks?

Maybe. If you run three different LLMs on the same invoice and they all return seven line items, you have more confidence than if just one model said it. If one model returns eight items and the others return seven, you know to flag it for review.
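As a rough sketch of that agreement check, assuming each model’s output has already been reduced to a line item count (the model labels below are placeholders, and I haven’t run this against real extractions):

```python
from typing import Optional


def council_verdict(item_counts: dict[str, int]) -> tuple[str, Optional[int]]:
    """Accept only when every model reports the same line item count."""
    if not item_counts:
        raise ValueError("need at least one model result")
    distinct = set(item_counts.values())
    if len(distinct) == 1:
        return "accept", distinct.pop()
    # Any disagreement means we cannot tell which model is right.
    return "human_review", None


unanimous = {"model_a": 7, "model_b": 7, "model_c": 7}
split = {"model_a": 7, "model_b": 8, "model_c": 7}

print(council_verdict(unanimous))  # ('accept', 7)
print(council_verdict(split))      # ('human_review', None)
```

Comparing counts is the crudest possible test. Three models can agree on seven items and still disagree on what those items are, so a real version would compare the extracted fields themselves.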

The hope is that errors shrink much faster than the number of models grows. If each model has a 3% error rate and their errors aren’t correlated, the chance that at least two of three models get the same invoice wrong is roughly 0.26%, and the chance that all three do is about 0.003%. That’s well under 1%.
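The arithmetic behind that estimate is short enough to check directly. This sketch assumes fully independent errors, which is exactly the assumption in question, so treat the output as a best case rather than a prediction.

```python
from math import comb


def majority_wrong(p: float, n: int) -> float:
    """Probability that more than half of n independent models err (error rate p each)."""
    k_min = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(k_min, n + 1))


def at_least_one_wrong(p: float, n: int) -> float:
    """Probability that one or more of n independent models err."""
    return 1 - (1 - p) ** n


p, n = 0.03, 3
print(f"majority wrong:     {majority_wrong(p, n):.4%}")      # 0.2646%
print(f"all three wrong:    {p ** n:.4%}")                    # 0.0027%
print(f"at least one wrong: {at_least_one_wrong(p, n):.4%}")  # 8.7327%
```

The last number matters as much as the first. If you only accept unanimous answers, roughly 9% of invoices would still be flagged for review under these assumptions.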

The question is whether different models actually make different mistakes, or whether they’re all trained on similar enough data that they fail in the same ways.

I haven’t tested this yet. But it’s the most interesting verification approach I’ve seen.

The Cost Equation Nobody Talks About

Running multiple models isn’t free.

If it costs $0.01 to have one LLM process an invoice and $5 to have a human review it, where’s the break-even point?

Running three models costs $0.03. Still way cheaper than human review. So economically, it makes sense even if it only marginally improves accuracy.

But here’s the thing: you’re not choosing between three models or human review. You’re choosing between three models that might still need human review versus one model that definitely needs human review.

The real win would be if the council approach lets you confidently skip human review 95% of the time instead of 0% of the time.

That changes everything.
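Using the illustrative prices above ($0.01 per model call, $5 per human review), a back-of-the-envelope sketch of the expected cost per invoice looks like this. The review rates are hypothetical scenarios, not measured results.

```python
LLM_COST = 0.01    # dollars per model call, from the example above
HUMAN_COST = 5.00  # dollars per human review, from the example above


def cost_per_invoice(n_models: int, review_rate: float) -> float:
    """Expected cost: every model runs once, a human reviews a fraction of invoices."""
    return n_models * LLM_COST + review_rate * HUMAN_COST


def break_even_drop(n_models: int) -> float:
    """How far the review rate must fall for n models to pay for the extra calls."""
    return (n_models - 1) * LLM_COST / HUMAN_COST


print(f"1 model, review everything: ${cost_per_invoice(1, 1.00):.2f}")  # $5.01
print(f"3 models, review 5%:        ${cost_per_invoice(3, 0.05):.2f}")  # $0.28
print(f"break-even drop in review rate: {break_even_drop(3):.2%}")      # 0.40%
```

At these prices, the two extra model calls pay for themselves as soon as the council removes more than 0.4 percentage points of human review. The model cost is almost irrelevant; the review rate is the whole equation.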

Where This Goes

We’ll get to autonomous agents. Not because the models will get perfect, but because we’ll solve verification.

Maybe it’s the council approach. Maybe it’s something else entirely, some clever ensemble method we haven’t thought of yet.

But right now, this is the bottleneck. Not capability. Detection.

The models are good enough to do the work. We just can’t tell when they’re lying.

Until we solve that, human-in-the-loop isn’t a feature. It’s a requirement.

And fully autonomous agents remain just out of reach.