The Trust Gap: Why Your AI Works But Nobody Uses It

Technical readiness is a milestone. Organisational trust is the destination.

My first sales order processing solution took eight weeks to build.

Now I can get to a working solution in three.

But my last project? Three weeks to build. Three months before anyone actually trusted it.

Three months of the client forwarding sales order emails to the agent, reviewing the output in their system, and only then releasing the order. Three months of a solution that worked sitting mostly unused.

That gap between technical readiness and organisational trust is the thing nobody budgets for.

The Pattern

Here’s what happens on almost every AI project I work on.

We build something. It processes sales orders, extracts invoice data, categorises transactions. It works. The accuracy is good. The demos impress stakeholders.

Then we deploy it. And nothing changes.

Operators still do manual checks. They still don’t trust the output. They still treat the AI like a suspicious colleague who might be lying.

The solution is ready. The organisation isn’t.

Why the Gap Exists

AI is probabilistic. Traditional software does what you tell it to do. AI does what it thinks you meant. That uncertainty is baked in, and people feel it even when they can’t articulate it.

Domain experts have years of intuition. They’ve seen the edge cases. They know which customers always have weird requirements, which suppliers send malformed invoices, which workflows break in Q4. They need to see the AI handle those situations before they trust it with the routine ones.

Operators fear replacement. Even when we’re explicit that the goal is augmentation, not elimination, the anxiety persists. They’re not being paid per order processed. They have no incentive to make themselves faster. And if they help the AI succeed, what happens to their job?

The solutions are never 100% accurate. Operators believe their own work is. It isn’t. Humans make errors constantly, but they believe they don’t. So when the AI makes a mistake they would have caught, it confirms their suspicion that the machine can’t be trusted.

One visible failure can undo weeks of progress. A single sales order booked incorrectly. Products sent to the wrong address. The wrong customer charged. If leadership sees it, if it creates a support ticket, if it costs real money, trust evaporates.

The Cost of Getting It Wrong

Not all mistakes are equal.

In finance, a mistake processing an invoice can cost thousands of euros. The tolerance for error is essentially zero. Every edge case matters. Every field must be verified.

In wholesale, sending an order to the wrong address costs tens of euros. Still bad, but recoverable. The threshold for acceptable risk is higher.

This is why the trust gap varies by industry. Finance is nit-picky because finance has to be. Wholesale can move faster because the consequences of failure are smaller.

When I scope a project, I try to understand this early. What does a mistake actually cost? That answer determines how much evidence we need before anyone will let the AI operate autonomously.
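One way to make that scoping question concrete is a back-of-the-envelope calculation. The sketch below is an illustration, not a method from any specific project: the function names, the 1 euro per order error budget, and the 20 and 2,000 euro mistake costs are all assumptions chosen to mirror the wholesale and finance examples above. It also assumes errors are independent and that the review period turns up no mistakes at all.

```python
import math


def max_error_rate(cost_per_mistake: float, budget_per_order: float) -> float:
    """Highest error rate whose expected cost per order stays within budget."""
    if cost_per_mistake <= 0:
        raise ValueError("cost_per_mistake must be positive")
    return min(1.0, budget_per_order / cost_per_mistake)


def clean_reviews_needed(error_rate: float, confidence: float = 0.95) -> int:
    """Consecutive error-free reviewed orders needed to claim, at the given
    confidence, that the true error rate is below `error_rate`.

    Solves (1 - error_rate) ** n <= 1 - confidence for n.
    """
    if error_rate <= 0:
        raise ValueError("error_rate must be positive")
    if error_rate >= 1:
        return 0
    return math.ceil(math.log(1 - confidence) / math.log(1 - error_rate))


# Hypothetical figures: "tens of euros" vs "thousands of euros" per mistake,
# with an assumed tolerance of 1 euro of expected error cost per order.
wholesale = clean_reviews_needed(max_error_rate(cost_per_mistake=20, budget_per_order=1.0))
finance = clean_reviews_needed(max_error_rate(cost_per_mistake=2000, budget_per_order=1.0))
print(f"wholesale: {wholesale} clean reviews, finance: {finance} clean reviews")
```

Under these assumptions wholesale needs 59 clean reviews and finance needs roughly 6,000. The exact numbers matter less than the ratio: a mistake that costs a hundred times more demands about a hundred times more evidence.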

The Staged Autonomy Model

Early on, we treated trust-building as something that would happen naturally. Deploy the solution, let people use it, and they’ll come around.

That doesn’t work.

Now we’re explicit. We walk clients through the stages from day one:

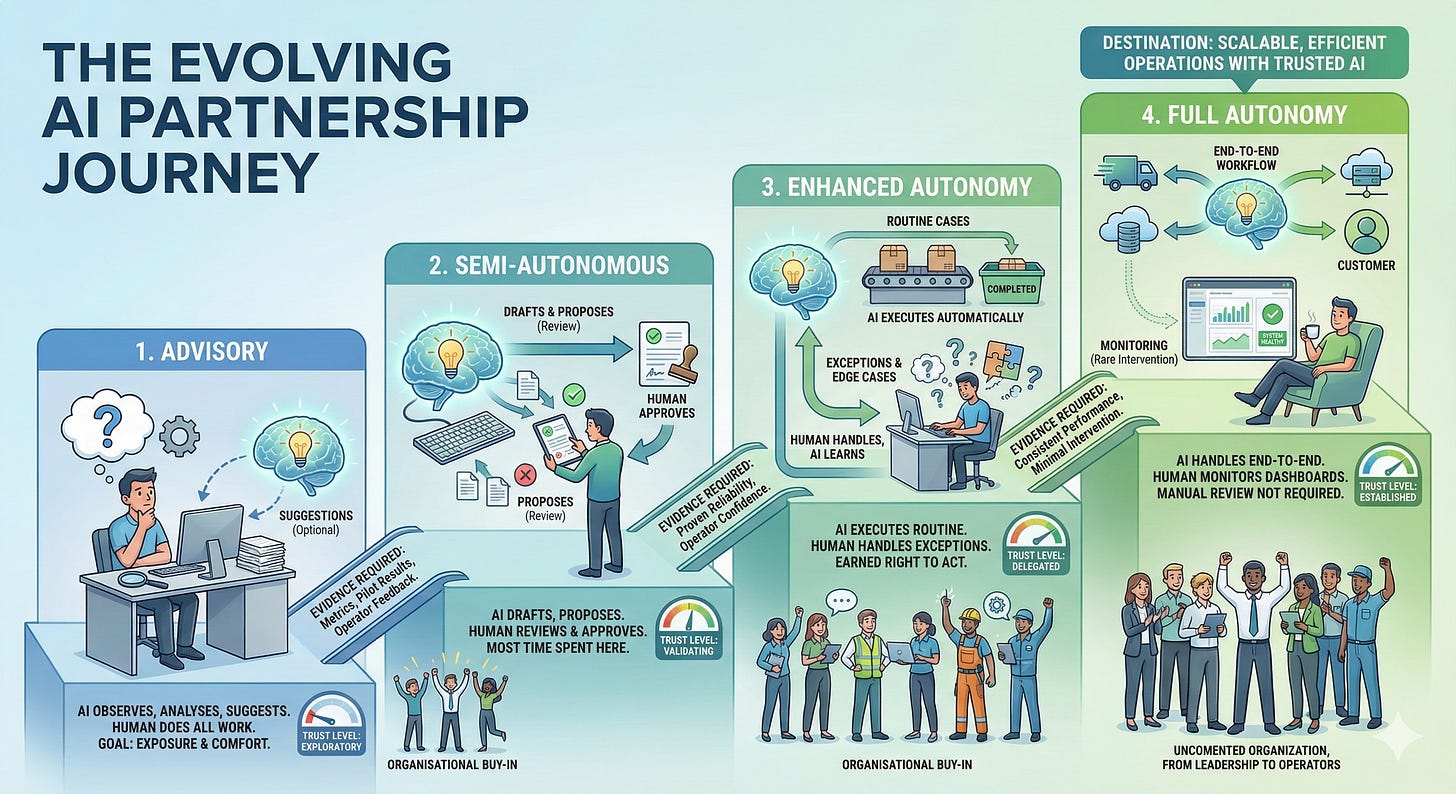

Advisory. The AI observes, analyses, suggests. Humans do all the work. The AI is a second opinion they can ignore. The goal is exposure. Getting operators comfortable with seeing AI output without any pressure to rely on it.

Semi-autonomous. The AI drafts outputs, proposes actions. Humans review and approve before anything happens. The AI does the work; humans verify it. This is where most projects spend the longest time.

Enhanced autonomy. The AI executes routine cases automatically. Humans handle exceptions and edge cases. The AI has earned the right to act on straightforward situations; humans still own anything complicated.

Full autonomy. The AI handles the workflow end-to-end. Humans monitor dashboards and intervene rarely. The AI has proven itself across enough scenarios that manual review is no longer required.

Each transition requires evidence. Metrics. Pilot results. Operator feedback. And it requires organisational buy-in. Not just from the sponsor who signed the contract, but from the people who actually use the system every day.
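The four stages and their evidence gates can be sketched as a small state machine. This is a minimal illustration under stated assumptions: the `Stage`, `Evidence`, and `next_stage` names are hypothetical, and the thresholds in `GATES` are placeholder numbers, not figures from a real deployment. The real gates depend on what a mistake costs in the client's domain.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    ADVISORY = 0
    SEMI_AUTONOMOUS = 1
    ENHANCED_AUTONOMY = 2
    FULL_AUTONOMY = 3


@dataclass
class Evidence:
    reviewed_cases: int         # outputs that humans have checked at the current stage
    accuracy: float             # share of those outputs accepted without correction
    operators_signed_off: bool  # buy-in from daily users, not just the sponsor


# Hypothetical gates: (minimum reviewed cases, minimum accuracy) to ENTER each stage.
GATES = {
    Stage.SEMI_AUTONOMOUS: (200, 0.90),
    Stage.ENHANCED_AUTONOMY: (1000, 0.97),
    Stage.FULL_AUTONOMY: (5000, 0.995),
}


def next_stage(current: Stage, evidence: Evidence) -> Stage:
    """Advance by at most one stage, and only when every gate condition holds."""
    if current is Stage.FULL_AUTONOMY:
        return current
    target = Stage(current + 1)
    min_cases, min_accuracy = GATES[target]
    ready = (
        evidence.reviewed_cases >= min_cases
        and evidence.accuracy >= min_accuracy
        and evidence.operators_signed_off
    )
    return target if ready else current


# Strong metrics without operator sign-off do not move the stage.
print(next_stage(Stage.SEMI_AUTONOMOUS, Evidence(1200, 0.98, operators_signed_off=False)).name)
```

Two design choices carry the point of the model: the function can only ever return the current stage or the one directly after it, so stages cannot be skipped, and operator sign-off is a hard condition rather than a nice-to-have.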

Moving Backwards Is Sometimes Necessary

We learned this the hard way.

On one project, we built an agent to help with capacity planning. Forecasting demand, allocating resources, flagging potential bottlenecks. We moved from semi-autonomous to full autonomy too fast. The accuracy was good but not good enough. Operators noticed. Forecasts were off. Resource allocations didn’t account for constraints they knew about but hadn’t documented. We’re talking about a few percent error rate, a small number in absolute terms, but visible.

We lost trust.

Here’s the thing: even in the semi-autonomous state, the solution was cutting planning time by 60%. That value was real. But by pushing too hard, we put that value at risk.

So we stepped back. Not all the way back. We didn’t return to advisory mode. We returned to semi-autonomous, communicated what we were adjusting and why, and started collecting feedback again.

It took time to regain confidence. But we did. The promise is still full autonomy. We’re just getting there at the right pace now.

Taking a step back doesn’t mean starting over. It means acknowledging reality and moving forward more carefully.

Getting Operators Involved Early

Almost always, sponsors want to move faster and operators are sceptical.

The sponsor signed the contract. They’ve bought the vision. They want ROI. They’re asking when we can remove the human from the loop.

The operators are the loop. They’re the ones who have to trust the AI with their work, their reputation, their job security.

This tension is predictable, and the solution is getting operators involved as early as possible.

What does that look like? They use and test every version of the product. They provide feedback constantly. They’re not observers watching from the sidelines. They’re participants shaping how the solution evolves.

When operators are resistant from the start, when they’ve decided they don’t want the project to succeed, trust-building takes much longer. It’s not a lost cause, but it requires more patience, more wins, more evidence.

When operators are engaged from day one, they become invested in the solution’s success. Their feedback improves the accuracy. Their buy-in smooths the transition. They stop seeing the AI as a threat and start seeing it as a tool they helped build.

What Accelerates Trust

Transparency. Show the AI’s reasoning, not just its answer. Let operators see why it made a decision. Black boxes breed suspicion.

Constant communication. I can’t overstate this. Updates. Check-ins. Feedback loops. Silence is the enemy of trust.

Reaction to every action. This is subtle but critical. If an operator does something, whether that’s submitting feedback, flagging an error, or asking a question, they need a response. If they forward an email to an agent and nothing happens, trust erodes immediately. A single unanswered email can be enough to make them question the entire system.
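In code, "reaction to every action" comes down to a handler that cannot return silence. The sketch below assumes a hypothetical `process` function standing in for whatever the agent actually does with a forwarded email; `handle_with_reply` and the reply wording are illustrative, not taken from a real system.

```python
from typing import Callable


def handle_with_reply(message: str, process: Callable[[str], str]) -> str:
    """Run `process` on an operator's message and always return a reply,
    including when processing fails or produces nothing."""
    try:
        result = process(message)
    except Exception as exc:
        # A real system would also log the failure or open a ticket here (assumption).
        return (
            f"Received, but processing failed ({type(exc).__name__}). "
            "A person will follow up."
        )
    if not result:
        return "Received. No action was taken; a person will review this."
    return f"Received and processed: {result}"


def failing_agent(message: str) -> str:
    # Hypothetical stand-in for an agent that cannot parse the email.
    raise ValueError("could not parse order")


print(handle_with_reply("Order 123: 10 units", failing_agent))
```

The operator gets an answer on all three paths: success, empty result, and failure. The failure reply is arguably the most important one, because it is the case where silence would otherwise be the default.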

Quick feedback loops. Daily iterations beat monthly releases. When operators see their feedback implemented quickly, they believe the system is actually learning.

Starting with low-stakes tasks. Build confidence on easy wins before tackling the workflows where mistakes are expensive.

What Kills Trust

One visible failure in front of leadership. Everything else can be recovered from. This one leaves scars.

Lack of explainability. When the AI gets something wrong and nobody can explain why, operators assume it will happen again randomly.

No clear escalation path. What happens when the AI is wrong? If the answer is unclear, people won’t rely on the AI for anything important.

Overpromising in sales. When expectations are set too high, even good performance feels like failure.

The Misconception That Blocks Progress

The biggest misconception clients have isn’t about timelines. It’s that the agent will learn on its own.

They’ve absorbed the narrative that AI improves with use. That it watches, adapts, gets smarter over time. That’s the promise of the technology, eventually.

We’re not there yet.

Today, improvement requires feedback, retraining, prompt adjustments, new examples. The agent doesn’t wake up smarter tomorrow just because it processed a hundred orders today.

When I explain this, I emphasise that we’re architecting solutions to benefit from autonomous learning once the technology is ready. The foundation is being built. But right now, improvement is manual, deliberate, and dependent on human input.

Setting this expectation early prevents frustration later.

The Slider

When clients ask how long it will take, I explain the slider.

On one end: speed. We deploy fast, iterate fast, move to autonomy fast.

On the other end: quality. We test thoroughly, gather feedback extensively, transition carefully.

You can’t maximise both. And here’s why it matters: we get one chance, maybe two, to earn operator trust. If we push them to use something before it’s ready, and it fails, we’ve burned that chance. Regaining trust takes longer than building it in the first place.

Most sponsors understand this. They know the risk of going too fast. They’d rather take an extra month than lose six months rebuilding confidence.

The slider isn’t about our preferences. It’s about what the deployment can survive.

The Destination

Full autonomy is possible. We’re moving clients there. But it’s earned, not given.

The companies that get there don’t skip stages. They build trust methodically. They expand autonomy gradually. They maintain human oversight until the evidence says they don’t need it.

This connects to a problem I wrote about before: we can’t fully verify LLM outputs yet. We can’t reliably detect when the AI is wrong. Until we solve that, staged autonomy isn’t just good practice. It’s necessary.

The trust gap isn’t a bug. It’s a feature.

It forces us to build systems that are actually reliable, not just systems that look impressive in demos. It forces us to earn the right to remove humans from the loop instead of assuming that right.

Three weeks to build. Three months to trust.

Plan for both.